Digital transformation for SMEs: when the wrong sequence leads to the failure of AI projects

We are seeing more and more SMEs investing in artificial intelligence: new tools, pilot projects, motivated teams. On paper, everything is in place for success. And yet, in reality, many AI projects do not deliver the expected results.

The problem rarely stems from the "idea of AI" or the technology itself. The real problem, which is much more subtle, is the order in which decisions are made. We start by choosing a tool, we embark on implementation, and then we realize far too late that we never really clarified why we were doing it, for whom, with what limitations, and with what governance.

In this article, we will look at how a bad sequence can derail your AI projects, and how to get things back on track to turn AI into a real lever rather than a costly disappointment.

Why the order of decisions matters so much

In many SMEs, digital transformation is experienced under pressure:

- market pressure

- pressure from comparisons with other companies

- pressure from executives who want to "do something with AI"

The result: we quickly look for a tool. We sign a contract, launch a pilot program, provide training, and push for adoption. At the time, it all seems logical. But if the foundation isn't clear, the tool only amplifies the existing confusion.

The forgotten questions that come back to haunt you

Before choosing an AI solution, it is important to clarify some simple questions that are rarely addressed in depth:

- Who actually decides what in this project?

- Why are we doing this, beyond the buzz surrounding AI?

- For what specific purpose, in which business process?

- What are realistic goals... and acceptable limits?

- What are our current capabilities: data, team, time, budget?

- What is possible today with AI... and what is not yet possible?

The real cost: fatigue and loss of confidence

A failed AI project is not just a wasted technical expense. The real cost lies elsewhere:

- Organizational fatigue: teams are growing weary of digital projects that change everything... without delivering.

- Skepticism: each new project is met with an increasingly cold "we'll see."

- Doubts among leaders: confidence in the ability to execute is eroding.

- Domino effect: it is not only the AI project that suffers, but all digital initiatives.

In many organizations, after one or two poorly sequenced projects, you hear people say, "AI doesn't work for us." In reality, it's not AI that doesn't work. It's the way it's deployed.

Concrete example in an SME

Imagine a small business providing professional services that decides to implement an AI tool to automate part of its customer relations. The tool is chosen quickly because a supplier gave a good demonstration. The contract is signed, the tool is configured, and it is activated.

A few weeks later:

- customer data is incomplete or poorly structured;

- AI-generated responses do not reflect the company's tone;

- teams feel deprived of their professional judgment;

- customers receive inconsistent messages.

The conclusion is then drawn that "the tool is not good." But the real problem is that data governance, decision-making roles, and expectations regarding the outcome had never been clarified before the solution was chosen.

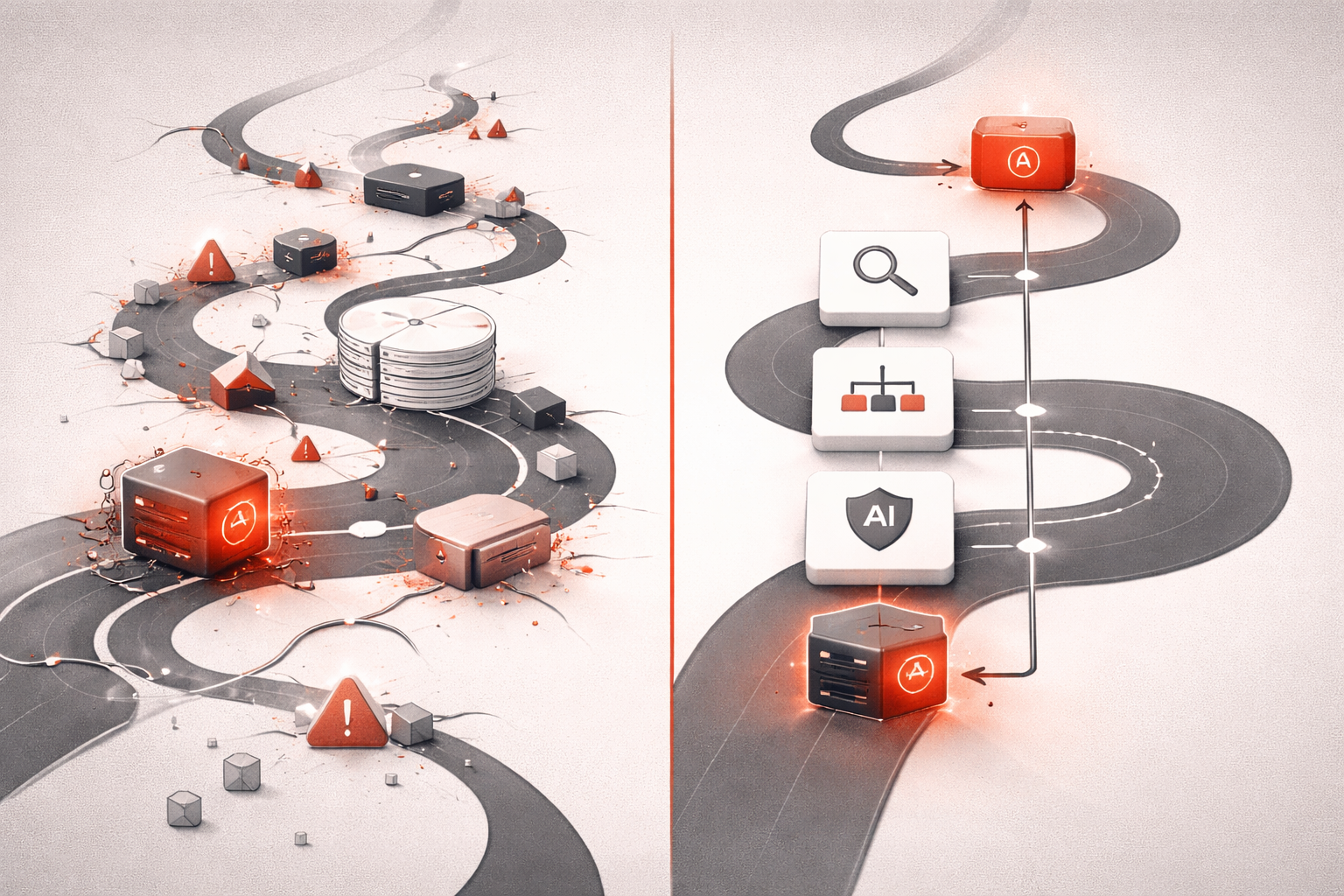

Rethinking the sequence: decision → structure → governance → tool

For an AI project to become a lever rather than a source of fatigue, the usual logic must be reversed. Instead of:

Tool → Pilot project → Training → Adoption

we aim for:

Clear decision → Structure → Governance → Tool → Implementation

1. Clear decision

The aim is to clarify:

- the business problem to be solved;

- the expected value for the SME (gains, risks avoided, time saved);

- the indicators that will allow us to say "yes, it's working."

2. Structure

We then define how the project will fit into the reality of the company:

- processes affected;

- necessary data and their current quality;

- teams involved and real time available;

- interactions with existing systems.
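
One way to make the "current quality" of the data concrete is to measure how complete the records are before any tool is chosen. The following is a minimal sketch in Python, not a full data audit. The field names (`name`, `email`, `last_contact`) and the sample records are hypothetical and only serve as an illustration.

```python
from typing import Any

# Hypothetical fields that a customer-relations process might rely on.
REQUIRED_FIELDS = ["name", "email", "last_contact"]


def field_completeness(
    records: list[dict[str, Any]], fields: list[str]
) -> dict[str, float]:
    """Return, for each field, the share of records where it is filled in."""
    if not records:
        return {field: 0.0 for field in fields}
    result = {}
    for field in fields:
        # A field counts as filled if it is present and neither None nor empty.
        filled = sum(1 for r in records if r.get(field) not in (None, ""))
        result[field] = filled / len(records)
    return result


# Illustrative sample data, invented for this example.
sample_customers = [
    {"name": "Client A", "email": "a@example.com", "last_contact": "2024-01-10"},
    {"name": "Client B", "email": "", "last_contact": None},
    {"name": "Client C", "email": "c@example.com", "last_contact": ""},
    {"name": "Client D", "email": "d@example.com", "last_contact": "2024-03-02"},
]

completeness = field_completeness(sample_customers, REQUIRED_FIELDS)
for field_name, share in completeness.items():
    print(f"{field_name}: {share:.0%} complete")
```

A result showing that a key field is only half filled is a signal to fix the data first, or to narrow the scope of the project, before comparing suppliers.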

3. Governance

Governance refers to all the rules governing the AI project:

- who can use the tool and in what contexts;

- what validations are necessary before trusting the results;

- how deviations or errors are monitored;

- how we maintain a clear source of truth for data and decisions.

4. Tool... finally

Once the decisions, structure, and governance are in place, choosing a tool becomes much simpler. The tool is evaluated not on the basis of the most spectacular demo, but on its ability to:

- support the defined process;

- comply with the agreed governance;

- integrate seamlessly with existing systems;

- remain understandable to the teams.
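
The four steps above can also be read as a simple gate: tool selection only starts once no question remains open in the earlier steps. The sketch below illustrates that idea in Python under stated assumptions. The `ProjectReadiness` class, its method names, and the sample question are hypothetical; a shared checklist on paper would serve the same purpose.

```python
from dataclasses import dataclass, field
from typing import Optional

# The order proposed in this article, before any tool is chosen.
SEQUENCE = ["decision", "structure", "governance"]


@dataclass
class ProjectReadiness:
    """Tracks which questions are still open at each step (hypothetical model)."""

    open_questions: dict[str, list[str]] = field(default_factory=dict)

    def first_blocking_step(self) -> Optional[str]:
        """Return the earliest step that still has open questions, or None."""
        for step in SEQUENCE:
            if self.open_questions.get(step):
                return step
        return None

    def ready_for_tool_selection(self) -> bool:
        """True only when decision, structure, and governance are all settled."""
        return self.first_blocking_step() is None


# Illustrative project state, invented for this example.
project = ProjectReadiness(
    open_questions={
        "decision": [],
        "structure": [],
        "governance": ["Who validates AI-generated replies before they are sent?"],
    }
)

blocking = project.first_blocking_step()
if blocking is None:
    print("Ready to evaluate tools.")
else:
    print(f"Not ready: open questions remain at the '{blocking}' step.")
```

The point of the design is that the check walks the steps in order, so an unresolved decision is always reported before an unresolved governance rule.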

AI as a test of decision-making maturity

A useful perspective is to view AI not as a starting point, but as a test of decision-making maturity.

If the organization fails to:

- clarify why it wants an AI project;

- describe the expected results;

- accept limitations and risks;

- establish minimal governance;

... then AI will mainly expose this lack of maturity. It won't create chaos; it will reveal it.

Conclusion: slow down to move forward more effectively

- A failed AI project is not proof that AI "doesn't work" in your SME.

- In many cases, it is the sequence of decisions that is at issue, not the technology.

- By regaining control over the order (decision → structure → governance → tool), you greatly increase your chances of success.

Subscribe to my newsletter to receive practical content on digital transformation in SMEs, and contact me if you want to clarify the sequence of your upcoming AI projects.